This week, I made my initial commit to the GitHub repository I’ll be using for my Webliographer project.

The purpose of Webliographer is to collect and manage web references, otherwise known as links or URLs. This initial commit focuses on importing links from various file formats and exporting a table of link information in TSV (tab-separated values) format.

Text File UI

Webliographer is a .NET command-line program. This type of user interface takes less time to develop than a graphical UI, but it can also make program functions less discoverable. So rather than rely only on command-line arguments, I decided instead to read commands from a text file. The reason: As I was testing the program, I found myself running the same commands repeatedly. To remember which commands to run, I would store the command syntax in a text document and paste the commands into a console window. So why not just have the program read from a text file directly?

The advantage of a command text file is that it gives you the full functionality of your favorite text editor. Commands can be copied, pasted, and modified as needed. It’s faster than pointing and clicking in a UI, though it does require a command-line way of thinking.

To interpret the command file, I wrote a bare-bones parser that reads the file and looks for one or more commands in a section of the file between the delimiters ExecuteStart and ExecuteEnd. Each command has a unique name and zero or more parameters, each on a separate line. Comments (lines that start with #) are ignored. Another section of the file (between LibraryStart and LibraryEnd) is ignored completely, so it can store commands that aren’t currently being used. Commands from this section can be copied and pasted into the active section when needed.
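
To make the file layout concrete, here is a minimal sketch of such a parser. It is written in Python for brevity and is not the actual C# code from the repository. It assumes that a command's name comes first, that its parameters follow on their own lines, and that a blank line ends the command. The command names in the sample (ImportTsv, ExportTsv, ImportMarkdown) and the file names are hypothetical.

```python
from typing import List, NamedTuple


class Command(NamedTuple):
    name: str
    params: List[str]


def parse_command_file(text: str) -> List[Command]:
    """Return the commands found between ExecuteStart and ExecuteEnd."""
    commands: List[Command] = []
    current: List[str] = []  # lines of the command currently being collected
    in_execute = False

    def flush() -> None:
        # Turn the collected lines into a command: first line is the name.
        if current:
            commands.append(Command(current[0], current[1:]))
            current.clear()

    for raw in text.splitlines():
        line = raw.strip()
        if line == "ExecuteStart":
            in_execute = True
        elif line == "ExecuteEnd":
            flush()
            in_execute = False
        elif not in_execute:
            continue  # the Library section (and anything else outside) is skipped
        elif line.startswith("#"):
            continue  # comment line
        elif line == "":
            flush()  # assumption: a blank line ends the current command
        else:
            current.append(line)
    flush()  # tolerate a file that ends without ExecuteEnd
    return commands


# Hypothetical command file; command and file names are made up for illustration.
SAMPLE = """\
LibraryStart
ImportMarkdown
awesome-list.md
LibraryEnd

ExecuteStart
# merge the per-source files
ImportTsv
links-a.tsv
links-b.tsv

ExportTsv
master.tsv
ExecuteEnd
"""

if __name__ == "__main__":
    for command in parse_command_file(SAMPLE):
        print(command.name, command.params)
```

Because everything outside the Execute section is skipped, moving a command from the library into the active section is the only step needed to run it.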

I wrote about the concept of a text file UI last year in Time Tortoise: Text File User Interface. However, Time Tortoise in its current form relies mostly on a graphical UI.

Importing Data

Webliographer needs to get its link data from somewhere. I have started by writing functions to import from a few different file formats:

- Google search results in JSON format: I was able to get Google search results in this format, which captures information about a Google search query and each of the search results. I use JsonConvert.DeserializeObject to turn the JSON into a C# object graph for further processing.

- Links in minimal TSV format: Each line in this minimal format has just a page title and a page URL, separated by a tab. It’s easy to create in a spreadsheet and export to a file. No special parser is required to read this format in C#.

- Links in a Markdown document: I wrote this importer so I could extract the links from the Awesome Competitive Programming List. Rather than a full Markdown parser, I just use a regular expression to look for links on each line.
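
The two simpler importers above can be sketched in a few lines. This is an illustrative Python version, not the C# code from the repository, and the regular expression is my own guess at a reasonable pattern rather than the one Webliographer uses. It only recognizes inline links of the form `[title](url)`, skips image links, and does not handle reference-style links or URLs that contain a closing parenthesis.

```python
import re
from typing import List, Tuple

Link = Tuple[str, str]  # (title, url)

# Inline Markdown link [title](url); the lookbehind skips images: ![alt](src)
LINK_RE = re.compile(r"(?<!!)\[([^\]]+)\]\(([^)\s]+)\)")


def links_from_markdown(text: str) -> List[Link]:
    """Collect every inline link, scanning the document line by line."""
    links: List[Link] = []
    for line in text.splitlines():
        for title, url in LINK_RE.findall(line):
            links.append((title, url))
    return links


def links_from_minimal_tsv(text: str) -> List[Link]:
    """Read lines of the form title<TAB>url; lines without a tab are skipped."""
    links: List[Link] = []
    for line in text.splitlines():
        title, sep, url = line.partition("\t")
        if not sep:
            continue  # blank or malformed line
        links.append((title.strip(), url.strip()))
    return links


if __name__ == "__main__":
    markdown = "- [Codeforces](https://codeforces.com) - contests\n"
    print(links_from_markdown(markdown))
    print(links_from_minimal_tsv("Example\thttps://example.com/\n"))
```

A regular expression like this is enough for a list-style document where each entry is a single inline link, which is why a full Markdown parser is not needed for that case.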

Storing Data

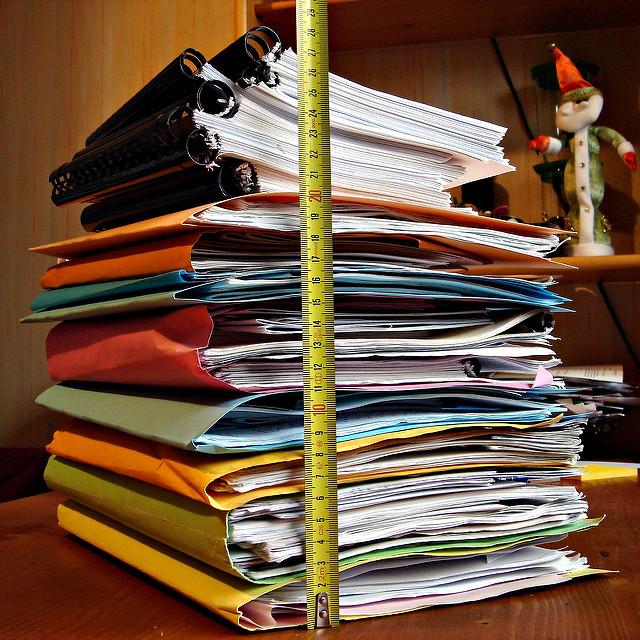

I don’t yet have a database up and running, so for now I’m storing my links in a master TSV file. Webliographer has a function to import multiple TSV files, consolidate any duplicates, and write out the result in TSV format. This master TSV can then be opened in a spreadsheet program for inspection. Once a database is available, I will import the master TSV and start using the database as the source of record.

For now, the master TSV contains each link’s title and URL, the domain where the page resides, and a rank (relative position).
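
As a rough illustration of the consolidation step, the Python sketch below merges several link lists into one table with those four columns. It is not the repository's C# code, and it rests on two assumptions of mine: two links count as duplicates when their URLs match exactly, and the rank is simply the link's 1-based position in the merged output. The column names are also hypothetical.

```python
import csv
import io
from typing import Iterable, List, Tuple
from urllib.parse import urlsplit

Link = Tuple[str, str]           # (title, url)
Row = Tuple[str, str, str, int]  # (title, url, domain, rank)


def consolidate(sources: Iterable[List[Link]]) -> List[Row]:
    """Merge link lists, keeping the first occurrence of each URL."""
    seen = set()
    rows: List[Row] = []
    for links in sources:
        for title, url in links:
            if url in seen:
                continue  # assumption: exact URL match means duplicate
            seen.add(url)
            domain = urlsplit(url).netloc
            rows.append((title, url, domain, len(rows) + 1))
    return rows


def to_tsv(rows: List[Row]) -> str:
    """Render the rows as tab-separated text with a header line."""
    out = io.StringIO()
    writer = csv.writer(out, delimiter="\t", lineterminator="\n")
    writer.writerow(["title", "url", "domain", "rank"])  # hypothetical column names
    writer.writerows(rows)
    return out.getvalue()


if __name__ == "__main__":
    first = [("A", "https://a.example/x"), ("B", "https://b.example/")]
    second = [("B again", "https://b.example/"), ("C", "https://c.example/y")]
    print(to_tsv(consolidate([first, second])), end="")
```

Exact URL matching is the simplest possible rule. It would treat `http://` and `https://` versions of the same page, or URLs that differ only by a trailing slash, as distinct links, so a real implementation may want to normalize URLs first.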

(Image credit: Alexandre Duret-Lutz)