Last week, I wrote about using search engine results to collect links to import into Webliographer. I used three search engine features: standard search, standard search with duplicates included, and site-specific search. Each of these features had pros and cons: Standard search returned results from more domains, but with fewer results per domain. Standard search with duplicates included reduced the number of domains, but returned more results from some domains. And site-specific search returned many results from a single domain, but not all of the results from that domain. All three techniques enforced a seemingly arbitrary limit of several hundred results. This week, I’m going to use a different technique for getting results from a single site.

All Questions

Search engines are optimized for relevance over result quantity, since most users are looking for a quick answer, not a comprehensive list of every reference on a topic. So compared to a simple search, it requires a bit more effort to obtain a comprehensive list of references.

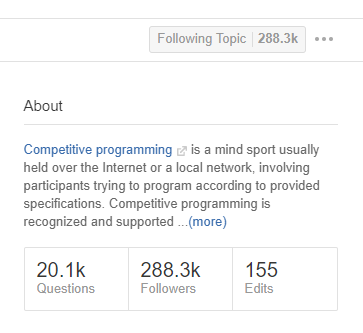

As I mentioned last week, Quora reports that it has over 20,000 questions in the competitive programming topic. The Google site-specific search for Quora only gave me about 600 unique links. I wanted to see if I could find the rest of them.

The All Questions page for a Quora topic is an “infinite scrolling” page. Of course, it doesn’t actually scroll forever. After a few minutes of scrolling, I got to the point where I had to click a “More” button to keep getting results, and eventually to the point where no more questions scrolled into view. I saved a local copy of the result, about 1.8 MB of HTML and embedded JavaScript.

To extract the list of questions from the saved page, I used a .NET library called OpenScraping. It provides a set of methods that use a JSON + XPath configuration string to parse an HTML document. For example:

    {
      'questions': {
        'text': './/a[contains(@class, \'question_link\')]'
      }
    }

This string tells the parser to find every <a> tag with a question_link class. This happens to be the way question links are identified on the All Questions page. Additional XPath code can be used to get the href part of the tag, which is the question URL.

OpenScraping uses the JSON instructions to parse an input HTML document and return the results in JSON. In this case, it returns a list of questions, with the question text and the question URL for each one. It’s easy to output this data to a TSV file, which Webliographer can merge with the results obtained last week through Google search.
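To make the XPath step concrete, here is a minimal sketch that applies the same expression to a small, well-formed sample fragment. It uses the XPath support built into .NET (System.Xml.XPath) rather than OpenScraping, so it is not the code used for this post, and it would not cope with real-world HTML that isn't valid XML. The sample markup, the QuestionLinks class, and the two-column TSV layout are assumptions for illustration.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Xml.Linq;
using System.Xml.XPath;

public static class QuestionLinks
{
    // Returns one "text<TAB>url" line per <a> element whose class
    // attribute contains "question_link".
    public static List<string> ToTsvLines(string xhtml)
    {
        XDocument doc = XDocument.Parse(xhtml);
        return doc.Root
            .XPathSelectElements(".//a[contains(@class, 'question_link')]")
            .Select(a => a.Value.Trim() + "\t" + (string)a.Attribute("href"))
            .ToList();
    }

    public static void Main()
    {
        // Hypothetical fragment; the real page is far larger and messier.
        string sample =
            "<div>" +
            "<a class='question_link' href='/What-is-competitive-programming'>" +
            "What is competitive programming?</a>" +
            "<a class='other' href='/about'>About</a>" +
            "</div>";

        foreach (string line in ToTsvLines(sample))
        {
            Console.WriteLine(line);
        }
    }
}
```

Only the first link in the sample matches, because the second one has a different class. Each output line holds the question text and its URL separated by a tab, which is the shape a TSV import expects.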

Missing Questions

Despite the promise of “20.1k” questions, the process described above retrieved only 2220 questions, about 11% of the advertised total. However, that is more than three times the number of questions returned through Google site-specific search.

Are there really over 17,000 questions not accounted for on the All Questions page? One indication that the page doesn’t have a complete set of questions: when I merged the All Questions list with the Google search list from last week, I got 2756 unique URLs, or 536 more than what was found on All Questions. So it’s clear that All Questions is not the only place to look for questions.
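The counting behind that merge is easy to sketch with a set union. The example below uses made-up URLs and a hypothetical UrlMerge helper; it shows how to get both the number of unique URLs and the number contributed only by the second list (2756 and 536 in the real data). It compares URLs as exact strings, which is an assumption: real URLs may need normalizing first.

```csharp
using System;
using System.Collections.Generic;

public static class UrlMerge
{
    // Returns the URLs in 'second' that do not already appear in 'first'.
    // Comparison is an exact, case-sensitive string match.
    public static HashSet<string> OnlyInSecond(
        IEnumerable<string> first, IEnumerable<string> second)
    {
        var extra = new HashSet<string>(second, StringComparer.Ordinal);
        extra.ExceptWith(first);
        return extra;
    }

    public static void Main()
    {
        // Made-up stand-ins for the two real lists.
        var allQuestions = new[] { "https://example.com/q/a", "https://example.com/q/b" };
        var googleSearch = new[] { "https://example.com/q/b", "https://example.com/q/c" };

        // The union of both lists, with duplicates collapsed.
        var merged = new HashSet<string>(allQuestions, StringComparer.Ordinal);
        merged.UnionWith(googleSearch);

        Console.WriteLine("Unique URLs: " + merged.Count);
        Console.WriteLine("Only in Google list: "
            + OnlyInSecond(allQuestions, googleSearch).Count);
    }
}
```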

Page Parsing

With a large number of questions to sift through, it’s useful to have some ranking system to identify those that Quora users have found to be more interesting. For the questions retrieved from Google search, the natural ranking is just the order of the search results, since the search algorithm returns results in relevance order.

But the All Questions list seems to be biased in favor of newer questions, with older questions mixed in seemingly arbitrarily. This ordering might be useful for people looking for questions to answer, but it’s probably not the order we want to get an overview of the most interesting questions.

Fortunately, the Quora question page provides statistics that can help determine a question rank. Those statistics can be retrieved as follows.

First, we need the page HTML. For the All Questions page, I just saved the HTML manually from the browser, since it was just one page. But I’d rather not manually save 2000+ pages, especially since pages change over time.

There are many ways to download the HTML for a web page. A simple option in C# is:

    // Requires: using System.Net;
    using (WebClient client = new WebClient())
    {
        // Save the page at url to a local file.
        client.DownloadFile(url, filename);
    }
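For a whole list of question URLs, the same call can sit inside a loop with a pause between requests. The following is a minimal sketch of that idea, not the exact code used here: the PageDownloader class, the question_N.html naming scheme, and the delay value are assumptions, and the download step is passed in as a delegate so the loop can be tried without touching the network.

```csharp
using System;
using System.Collections.Generic;
using System.Threading;

public static class PageDownloader
{
    // Calls download(url, filename) for each URL, sleeping after each request.
    // Returns the list of filenames that were requested.
    public static List<string> DownloadAll(
        IEnumerable<string> urls, Action<string, string> download, TimeSpan delay)
    {
        var filenames = new List<string>();
        foreach (string url in urls)
        {
            // Hypothetical naming scheme: question_0.html, question_1.html, ...
            string filename = "question_" + filenames.Count + ".html";
            download(url, filename);
            filenames.Add(filename);
            Thread.Sleep(delay); // pause so the server isn't hammered
        }
        return filenames;
    }

    public static void Main()
    {
        var urls = new[] { "https://example.com/q/a", "https://example.com/q/b" };

        // Stand-in for the real download. With a WebClient named client,
        // pass client.DownloadFile here instead of the lambda.
        DownloadAll(urls,
            (url, file) => Console.WriteLine(url + " -> " + file),
            TimeSpan.FromMilliseconds(100));
    }
}
```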

With a loop inside the using statement, and a Thread.Sleep after each iteration (to avoid abusing Quora), I was able to get the HTML for each question page in my list. With the pages stored locally, I could then use the same XPath process described above to extract parts of the page. I started with these two elements:

    'Question': {
      'AnswerCount': './/div[contains(@class, \'answer_count\')]',
      'ViewCounts': './/span[contains(@class, \'meta_num\')]'
    }

The div contains the number of answers that the question has. The span contains the number of views for a single answer, so the ViewCounts XPath returns a collection of integers for questions with more than one answer. Both of these numbers are a way to measure the level of interest that Quora users have in a question.

I found a couple of technical limitations in parsing the question pages:

- Some information (e.g., question follower count) is only available to logged-in users. Since my automated downloader is not logged in, I don’t have that metric available in the HTML. With some extra work involving cookies, there is likely a way to be logged in while running automation.

- Like the All Questions page, the question page has content that loads dynamically (using JavaScript) when the user scrolls down. Since my downloader doesn’t scroll or run JavaScript, it only retrieves the first six answers, and therefore the first six view counts. However, the answer count still reflects the actual number of answers, up to a limit of 100.

Despite these limitations, the two pieces of information provide a way to rank and categorize the full list of questions. Here are a few statistics from the data so far, focusing on the answer count (since the data for that metric is more complete):

- Only one question in the list has over 100 answers.

- About 100 questions have at least 10 answers.

- Over 1000 questions have at least 2 but fewer than 10 answers.

- About 800 questions have exactly 1 answer.

- The remaining 700 or so questions are unanswered.
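A breakdown like this amounts to grouping questions by answer count. Here is a rough sketch of that grouping, using made-up counts and a hypothetical AnswerBuckets helper. The bucket boundaries follow the list above, except that the single question with over 100 answers is folded into the "10 or more" bucket; the label strings are arbitrary.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class AnswerBuckets
{
    // Maps an answer count to a bucket label.
    public static string Label(int answerCount)
    {
        if (answerCount >= 10) return "10 or more";
        if (answerCount >= 2) return "2 to 9";
        if (answerCount == 1) return "exactly 1";
        return "unanswered";
    }

    // Counts how many questions fall into each bucket.
    public static Dictionary<string, int> Count(IEnumerable<int> answerCounts)
    {
        return answerCounts
            .GroupBy(c => Label(c))
            .ToDictionary(g => g.Key, g => g.Count());
    }

    public static void Main()
    {
        // Made-up answer counts, one per question.
        int[] sample = { 0, 1, 1, 3, 9, 10, 100 };
        foreach (var pair in Count(sample))
        {
            Console.WriteLine(pair.Key + ": " + pair.Value);
        }
    }
}
```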

I rounded those numbers because I haven’t studied the data closely yet, so it probably needs some cleanup. Next week, I’ll look for more questions, to see what percentage of the 20.1k total I can find through other techniques.